Welcome

Hello and welcome to Building Hybrid Instruments.

Links to relevant resources for the course as well as student contributions and discussions will be posted on this blog.

Please begin by viewing the syllabus page.

Construct/ Destruct

discovery, exploration, fear, destruction; of sound

Video Performance

Materials: fabric, contact mics, human hand, shadow

Live performance at Station P

Materials: fabric, contact mics, human hand, shadow, Hamlet on vinyl, turntables

Ephemeral Existence started as a simple investigation into the nostalgic world of analog audio recording. As the investigation continued, it became apparent that there is a powerful relationship between people and unique physical objects that share their vulnerabilities. Something becomes more treasured when it is not immediately replaceable. This observation became the central concept of the project, which sought to discover how the temporary nature of our existence is reflected in our behaviors.

Epemeral Existence from Dan Russo on Vimeo.

Edison wax cylinders became the focus due to their delicate construction and specific frequency ranges. If tuned properly, a cylinder recorder could become a piece of hardware very specific to the content it was to record.

The hardware combines modern electronics with foil recording technology to bring together the experience of our present and our past.

The physical form of the sound waves can be seen as a visual pattern on the surface of the foil above.

SketchHelper is an app that aids in the analog perspective sketching process. The idea is that the artist can use the application to provide on-the-go construction lines. Currently the design of the interface is static and not technically optimal. There is much left to do, so the project will continue next semester with surveys, user testing, and design upgrades based on the feedback.

SketchHelper speaks to the growing world of intelligent machine aids in analog processes. The appeal of this kind of process design is that it supports the quick, intuitive methods of making that people already use in the physical world, in real time, while adding efficiency and insight, so the designer can create things, and in ways, that might not have been possible before. By doing the tedious work for the designer and responding intelligently to the designer’s gestural habits, this application will become embedded in processes that designers are already familiar with. I envision it as analogous to a calculator in the math world: there to make math easier, not to replace the person’s thought process.

The first video shows the app in use at high speed. The second video shows someone who had just been introduced to the application giving what I considered extremely insightful feedback; it also communicates the direction I intend to take as I continue this project.

SketcHelper from Yeliz Karadayi on Vimeo.

SketcHelper from Yeliz Karadayi on Vimeo.

Some people reacted, some ignored, some got angry, some engaged. From the user/player/attacker perspective, the possibility of gamification of invasion pops up. Aggressive personalized advertisement. The variety of reactions brings up new questions and the project calls for more exploration.

Photos were logged here

Technical

This project was much more of a challenge than I had originally thought. The gears that were printed to turn the pan-tilt mechanism were very unreliable in the end, as they would sometimes snag at the teeth. Also, mapping of space is not trivial, at least not for me.

“Kim vs. Comet” is a real-time comparison of the volume of data created via social media about Kim Kardashian and the Rosetta comet landing.

How do online interactions differ from real world interactions? People often create digital personalities for themselves. These alternate selves make it easier to disassociate the physical self from their digital interactions. This mental distance can lead to uncharacteristic behavior in the digital world.

How does media influence public perception? Kim’s “internet breaking” magazine cover was published at about the same time as scientists landed on the Rosetta comet. However, Kim seemed to get most of the media attention. Does that mean people care less about humans landing on a comet? Or does the media only allow the public to see what will get it the most views and publicity?

This piece physicalizes the digital interaction of tweeting, thereby forcing people to confront their own and others’ digital interactions in the real world.

Inside the sculpture, there are two thermal printers, two Arduinos, and a laptop running two Processing sketches. Each Processing sketch scrapes the most recent tweet from Twitter matching the search query for either @KimKardashian or @ESA_Rosetta. The newly scraped tweet is then compared to the previous tweet. If it is different, it is printed on one of the thermal printers. If it is the same as the previous tweet, the sketch waits a few seconds (to avoid exceeding rate limits) and scrapes again for the most recent tweet matching its query. The printed sheets are allowed to hang from the printers as a visual comparison of the volume of data created about each topic.

In the future I would like to improve the piece by consolidating the electronics and code into one Processing sketch and a Raspberry Pi.
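The compare-then-print loop described above can be sketched roughly as follows. This is a minimal illustration in Python rather than the Processing code the piece actually runs, and it handles both queries in one loop instead of two separate sketches. The names `poll_new_tweets`, `fetch_latest`, and `print_tweet` are hypothetical stand-ins for the scraping and thermal-printer steps, and the five-second wait is an assumed value.

```python
import time
from typing import Callable, Optional


def poll_new_tweets(
    fetch_latest: Callable[[str], Optional[str]],  # hypothetical scraper: query -> newest tweet text
    print_tweet: Callable[[str, str], None],       # hypothetical printer: (query, tweet) -> None
    queries: list[str],
    wait_seconds: float = 5.0,                     # assumed pause; the post only says "a few seconds"
    max_rounds: int = 3,
    sleep: Callable[[float], None] = time.sleep,
) -> dict[str, int]:
    """Print each query's newest tweet only when it differs from the last one seen."""
    last_seen: dict[str, Optional[str]] = {q: None for q in queries}
    printed: dict[str, int] = {q: 0 for q in queries}

    for _ in range(max_rounds):
        found_new = False
        for query in queries:
            tweet = fetch_latest(query)
            # A tweet is "new" only if it differs from the previous one for this query.
            if tweet is not None and tweet != last_seen[query]:
                print_tweet(query, tweet)
                last_seen[query] = tweet
                printed[query] += 1
                found_new = True
        if not found_new:
            # Nothing changed: back off before asking again to stay under rate limits.
            sleep(wait_seconds)
    return printed


if __name__ == "__main__":
    # Canned feeds stand in for the live Twitter search results.
    feed = {
        "@KimKardashian": iter(["tweet A", "tweet A", "tweet B"]),
        "@ESA_Rosetta": iter(["tweet X", "tweet X", "tweet X"]),
    }
    counts = poll_new_tweets(
        fetch_latest=lambda q: next(feed[q], None),
        print_tweet=lambda q, t: print(f"{q}: {t}"),
        queries=list(feed),
        sleep=lambda seconds: None,  # skip real waiting in the demo
    )
    print(counts)
```

The per-query counts returned here correspond to the lengths of paper hanging from each printer.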

‘CMU View from Afar’ is a remote viewing tool for spaces at Carnegie Mellon University.

Why

In our initial research process we spoke with many current CMU students who had taken a campus tour prior to their arrival at school. They all mentioned that the tour took place outside of the buildings on campus, so they were unable to get an adequate look inside some of the labs, lecture halls, and eating areas. Many international students also mentioned that they were unable to visit CMU for a campus tour before their academic career here began, because they could not travel to Pittsburgh just for a short visit. With this information, we attempted to create a tool for potential students, or anyone interested for that matter, to view the inside of rooms at CMU without leaving the comfort of their home, wherever that may be.

How

The project aims to provide an ‘inside’ view of Carnegie Mellon’s facilities. Our website, cmuviewfromafar.tumblr.com, provides this experience. It is a three step process:

1. A PDF is downloaded and printed out. This becomes the interface for the tool. (see fig. 1)

2. An instructional video can be watched on the website, explaining how the printed PDF is then folded into a paper box. (see fig. 2)

3. A .apk file, an application for Android devices, can be downloaded from the website and installed on an Android smart device. (see fig. 3)

Now, when the application is opened and the camera view is held over the box, a [glitchy but recognizable] room within Carnegie Mellon University is augmented onto the box on the screen of the device. Different rooms can be toggled between using a small menu located on the side of the screen.

In this prototype, three rooms are available within the menu: an art fabrication lab located in Doherty, a standard lecture hall, and Zebra Lounge Cafe, located within the School of Art.

Video

Looking Ahead

For future iterations, we hope to incorporate a process for recording 3D video that will allow animated 3D interactions to occur within the box in real time. This is possible using the Kinect and a custom-made plugin from Quaternion Software.

The Big Picture

CMU View From Afar is a tangible interface for remotely navigating a space, using Augmented Reality technology. Our goal was to make this process as accessible and simple as possible for the user to implement. We set up the website and decided to have a single folded piece of paper to act as the image target because internet access and a printer are the only things needed to begin using this tool. The .apk file can also be continuously updated by us, and downloaded by a user, while the box as an image target remains the same. This can allow for the same box to function as a platform for many methods of information distribution, and all that has to happen is updating the .apk file.

Vox Proprius (source) is an iPhone app that harmonizes with you while you sing. Running on the openFrameworks platform, it uses the ofxiOS addon combined with the ofxPd addon to generate sound and visuals.

All of the extra parts are generated live from your own voice using a pitch shifter in Pd. Songs can be written in any of a number of composition programs (I used MuseScore) and exported as a MusicXML file for import and synthesis in the app.
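As a rough illustration of what a pitch shifter has to compute for each harmony part, the sketch below converts a musical interval in semitones into a frequency ratio. It is written in Python, not Pd, and assumes twelve-tone equal temperament; the function names `shift_ratio` and `harmony_frequencies` are hypothetical, and the app's actual Pd patch may work differently.

```python
def shift_ratio(semitones: float) -> float:
    """Frequency ratio for a shift of `semitones` in 12-tone equal temperament (an assumption)."""
    return 2.0 ** (semitones / 12.0)


def harmony_frequencies(sung_hz: float, intervals: list[float]) -> list[float]:
    """Target frequency of each harmony part, given the sung pitch and intervals in semitones."""
    return [sung_hz * shift_ratio(n) for n in intervals]


if __name__ == "__main__":
    # A sung A3 (220 Hz) harmonized a major third, a perfect fifth, and an octave above.
    for interval, hz in zip([4, 7, 12], harmony_frequencies(220.0, [4, 7, 12])):
        print(f"+{interval} semitones -> {hz:.2f} Hz")
```

In a setup like this, the intervals for each moment of the song would come from the imported score, while the sung pitch comes from the live voice.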

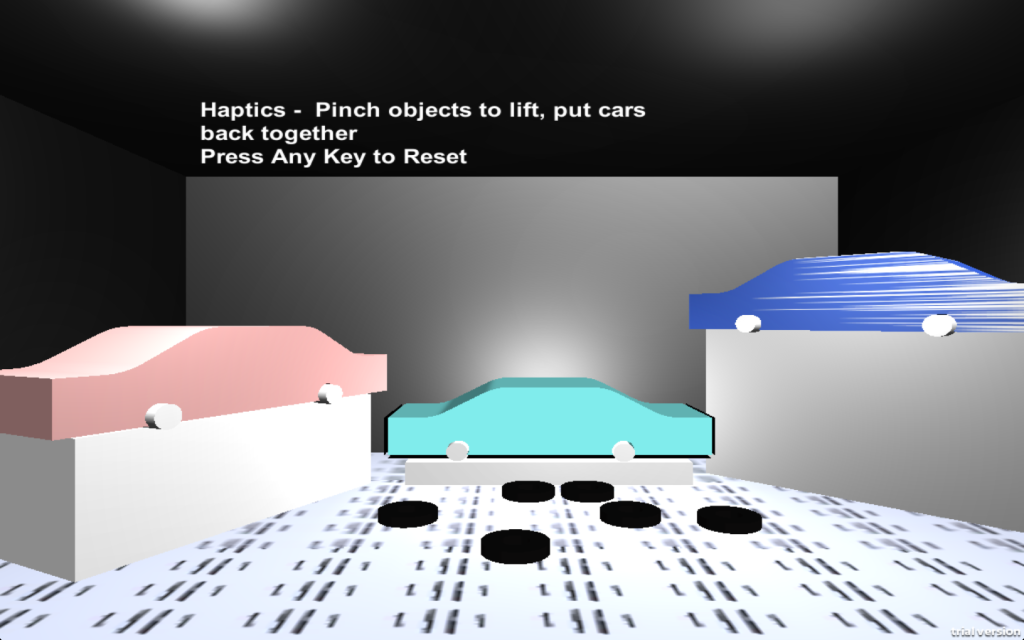

Haptics 3D is a wearable bracelet and Unity application. The bracelet syncs with Unity to give haptic feedback when a user’s hand picks up an object in 3D space and connects the object to another part in 3D space. The demo has 3 cars, each needing 2 car tires to be connected to the axles. The tool is meant to be a starting point for better understanding 3D objects and 3D space in virtual reality through haptic feedback.

This project uses Leap Motion to detect hand location in 3D space, Unity to model the 3D scene, imported packages to connect Unity to the Leap Motion, and Uniduino to connect to the Arduino for haptic feedback.

Future developments would give students a low-cost way to explore complex systems and learn how to build them through virtual reality. Students would learn to build mechanical objects with their hands when they don’t have access to the physical components of a mechanical system, because it no longer exists or is too expensive to access.

rehab touch from Meng Shi on Vimeo.

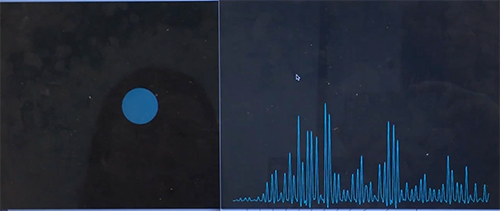

The idea of the final project is to explore possible ways to detect people’s gestures when they touch something.

Background:

Stroke affects the majority of survivors’ ability to live independently and their quality of life.

Rehabilitation in a hospital is extremely expensive and cannot be covered by medical insurance.

Our aim is to provide them with a possible low-cost solution: a home-based rehabilitation system.

Touche:

www.disneyresearch.com/project/touche-touch-and-gesture-sensing-for-the-real-world/

Touch and activate:

dl.acm.org/citation.cfm?id=2501989

These two projects detect gestures in different ways: Touche senses capacitance changes in the circuit, while Touch and Activate detects changes in frequency response.

My exploration of these two was based on two sets of instructions:
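Whichever signal is measured, one simple way to turn a measured profile into a gesture label is to compare it against stored example profiles and pick the closest. The Python sketch below illustrates that idea only; it is an assumption for illustration, not the method confirmed by either project, and the template values and names (`classify`, `templates`) are made up.

```python
import math
from typing import Sequence


def distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Euclidean distance between two profiles of equal length."""
    if len(a) != len(b):
        raise ValueError("profiles must have the same number of samples")
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def classify(profile: Sequence[float], templates: dict[str, Sequence[float]]) -> str:
    """Return the name of the stored template closest to the measured profile."""
    return min(templates, key=lambda name: distance(profile, templates[name]))


if __name__ == "__main__":
    # Hypothetical response profiles (one value per probed frequency), recorded in advance.
    templates = {
        "no touch": [0.9, 0.8, 0.7, 0.6],
        "one finger": [0.9, 0.5, 0.4, 0.6],
        "grasp": [0.3, 0.2, 0.4, 0.6],
    }
    measured = [0.85, 0.55, 0.45, 0.6]
    print(classify(measured, templates))
```

A noisy environment would shift the measured profile away from every template, which is one way to picture why recognition degrades there.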

Instructable: www.instructables.com/id/Touche-for-Arduino-Advanced-touch-sensing/

Ali: http://artfab.art.cmu.edu/touch-and-activate/

I first explored “Touche” and showed it in critique; after the final critique, I moved on to “Touch and Activate”.

To provide feedback, I connected Max to Processing and did the visualization in Processing, since Max does not seem well suited to data visualization. (I didn’t find a good library for data visualization in Max, perhaps because of my limited knowledge of Max.)

Limitations of the system:

“Touch and Activate” does not seem to work well in a noisy environment (like the final demo environment), even though it only uses high frequencies.

The connection between Max and Processing is not very robust, so the signal is too weak to drive more complicated patterns.

In a dark room, words, motions, and even thoughts are amplified.

Echo Chamber places its audience inside a room where the only sound is the sound that they make, cycled through an audio feedback loop, and the only light is light that follows this sound’s pitch and volume.

The piece places its audience unexpectedly in control of this room, and explores how they react. Do they explore its sounds and lights? Do they try to find order in the feedback? Or do they shrink back, afraid that the feedback will grow out of control?